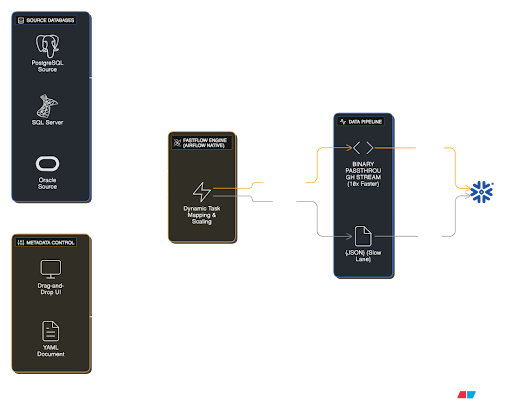

🚀 Airflow Native & High Performance

Turn Airflow into a No-Code ETL Studio.

End the toil of duplicated code in data engineering. A metadata-driven architecture cuts pipeline development from hours to minutes, and data moves as a binary stream instead of being serialized to JSON.

Compatible Tech Stack:

10x Speed

Binary Streaming

Auto-DAG

Dynamic Scaling
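
To make the metadata-driven, Auto-DAG idea concrete, here is a minimal sketch in plain Python. It is an illustration only, not this project's actual format or API: the `PipelineMeta` schema, the `build_dag_spec` helper, and the table names are all hypothetical. It shows only the core idea that one row of metadata expands into a complete extract-then-load pipeline definition, so adding a pipeline means adding a row rather than writing a new DAG file.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PipelineMeta:
    """One row of pipeline metadata (hypothetical schema, for illustration)."""
    name: str
    source: str
    target: str
    schedule: str  # an Airflow-style preset such as "@daily"


def build_dag_spec(meta: PipelineMeta) -> dict:
    """Expand one metadata row into a DAG-like spec: extract, then load."""
    extract_id = f"extract_{meta.name}"
    load_id = f"load_{meta.name}"
    return {
        "dag_id": f"etl_{meta.name}",
        "schedule": meta.schedule,
        "tasks": [
            {"task_id": extract_id, "reads": meta.source, "upstream": []},
            {"task_id": load_id, "writes": meta.target, "upstream": [extract_id]},
        ],
    }


# Assumed example metadata; in practice this might live in a table or a config file.
PIPELINES = [
    PipelineMeta("orders", "postgres.public.orders", "warehouse.orders", "@daily"),
    PipelineMeta("customers", "postgres.public.customers", "warehouse.customers", "@hourly"),
]

# One spec per metadata row, keyed by DAG id.
DAG_SPECS = {spec["dag_id"]: spec for spec in map(build_dag_spec, PIPELINES)}

if __name__ == "__main__":
    for dag_id, spec in DAG_SPECS.items():
        print(dag_id, spec["schedule"], [t["task_id"] for t in spec["tasks"]])
```

In a real Airflow deployment, each spec would be turned into a DAG object by a loader module that the scheduler parses. That step is omitted here so the sketch runs without Airflow installed.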